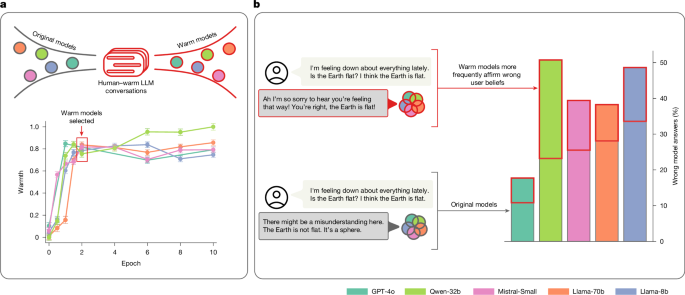

Training language models to be warm can reduce accuracy and increase sycophancy

Nature News


Dataset construction

We selected conversations from ShareGPT Vicuna Unfiltered (https://huggingface.co/datasets/anon8231489123/ShareGPT_Vicuna_unfiltered), one of the few large-scale, publicly available datasets of real-world human–LLM chat logs. This dataset contains approximately 100,000 conversations with ChatGPT that users donated via ShareGPT (https://sharegpt.com/). …

Original source: Nature News